Optimising a Pipelined RISC-V Core: From Naive Pipeline to Near-Superscalar Performance

This post walks through the complete optimization journey of a single-issue pipelined RV32I core, from a plain five-stage implementation all the way to a version that runs within 2.3% of a superscalar design on CoreMark. Every number here comes from actual simulation runs on my own implementation. This is not a theoretical survey – it is what happened, step by step, with real cycle counts.

Before getting into the details, a disclaimer worth repeating throughout: modern high-performance processors like those from ARM, Intel, or Apple are not just superscalar, they are wide out-of-order superscalar with speculative execution, branch prediction trained on billions of cycles of silicon, register renaming, reorder buffers, and execution units that would make what is described here look like a toy. That context matters. The goal here was never to match a Cortex-A or an M-series core. The goal was to understand what headroom exists in a single-issue pipeline, and how far it can be pushed before the fundamental architectural limit of issuing one instruction per cycle becomes the ceiling.

What is RISC-V

RISC-V is an open instruction set architecture. Unlike ARM or x86, it has no licensing cost and no proprietary extensions that require legal agreements. The spec is public. Anyone can implement it.

RISC-V follows the reduced instruction set computing philosophy. Instructions are regular in format, memory access is only through explicit load and store instructions, and the register file is central to all computation. There is no implicit state being shuffled between operations the way x86 does with flags embedded inside complex instructions.

The base integer ISA is RV32I. The 32 means 32-bit address space and 32-bit data, and the I means the base integer instruction set. This gives you arithmetic, logical operations, shifts, loads, stores, branches, and jumps. That is enough to run real software.

A few example RV32I instructions to make the encoding tangible:

add x3, x1, x2 # x3 = x1 + x2

lw x5, 0(x6) # x5 = Memory[x6 + 0]

sw x5, 4(x6) # Memory[x6 + 4] = x5

beq x1, x2, label # if x1 == x2, jump to label

jal x1, func # x1 = PC+4, jump to func

All instructions are 32 bits wide and fixed format, which makes decode logic straightforward compared to variable-length ISAs.

The M Extension

RV32I alone has no multiply or divide hardware. For context: CoreMark, the benchmark used throughout this project, does multiplication. Running CoreMark on a pure RV32I implementation means the compiler has to synthesize multiplication out of shifts and additions, which is expensive in cycle count. Adding the M extension gives hardware multiply and divide instructions. This was included in the implementation so that CoreMark runs with real hardware multiply, not software emulation. When the implementation is described as RV32I throughout but runs CoreMark well, the M extension is implicitly included.

Without M extension, the compiler emits something like this for a 32-bit multiply:

# software multiply: x1 * x2 -> x3 (simplified)

# requires a loop of shifts and conditional adds

# easily 10-20 instructions for a full 32x32 multiply

With the M extension, it collapses to a single instruction:

mul x3, x1, x2 # x3 = x1 * x2 (one cycle, hardware)

The difference in instruction count and cycle cost for a benchmark that multiplies in an inner loop is significant.

The Processor Design Landscape

Before getting into pipeline optimisation specifically, it helps to understand where a pipelined core sits among the broader space of processor designs. These are not just academic categories – each represents a real tradeoff between implementation complexity, clock frequency, throughput, and area.

Single-Cycle

The simplest possible design. Every instruction completes in exactly one clock cycle. The clock period must be long enough for the slowest instruction to finish – which means the entire datapath, from instruction memory read through register file read, ALU computation, data memory access, and register write, must all complete within one cycle.

The problem is that the clock period is determined by the worst case. A load instruction touches instruction memory, the register file, the ALU, data memory, and then writes back. An ADD instruction only needs the register file and ALU. In a single-cycle design, ADD still takes the same time as a load because the clock period is sized for the load. Most of the cycle, the ADD datapath is idle. This is deeply wasteful.

Single-cycle timing (all instructions share the worst-case period):

ADD: [RF read][ ALU ][ idle ] <- wasting time

LW: [RF read][ ALU ][ MEM read ][ RF write] <- determines clock period

<------------- T_clock ------------------>

Multi-Cycle

Multi-cycle designs break instruction execution into steps and allow each instruction to take as many cycles as it actually needs. A load takes five steps, an ADD takes four, a branch takes three. The clock period is now sized for the longest single step, not the longest complete instruction. This allows a faster clock compared to single-cycle.

The tradeoff is control complexity. A finite state machine governs which step the processor is in for any given instruction, and different instructions have different step sequences. There is also only one datapath reused across steps, which means registers are needed to hold intermediate results between steps. Designing and verifying this state machine is significantly more work than a single-cycle datapath, and the processor still only works on one instruction at a time.

Multi-cycle state machine (simplified):

IF -> ID -> EX -> MEM -> WB (load: 5 cycles)

IF -> ID -> EX -> WB (add: 4 cycles)

IF -> ID -> EX (branch: 3 cycles)

Clock period = max(T_IF, T_ID, T_EX, T_MEM, T_WB)

< T_single_cycle (shorter, faster clock)

Pipelined – 3, 5, 7 Stages

Pipelining takes the multi-cycle insight and adds one more: while one instruction is in step 2, the hardware for step 1 is sitting idle. A new instruction can start step 1 in that same cycle. In steady state, the pipeline has multiple instructions in different stages simultaneously, one per stage, all progressing in lockstep each clock cycle.

The classic five-stage pipeline (IF, ID, EX, MEM, WB) is what most architecture courses use as the baseline because it balances stage count against implementation complexity well.

5-stage pipeline in steady state:

Cycle: 1 2 3 4 5 6 7

I1: [IF] [ID] [EX] [MM] [WB]

I2: [IF] [ID] [EX] [MM] [WB]

I3: [IF] [ID] [EX] [MM] [WB]

I4: [IF] [ID] [EX] [MM] [WB]

Throughput: 1 instruction retired per cycle (ideal)

Three-stage pipelines appear in small embedded cores (ARM Cortex-M0, for example) where area is the primary constraint. Fewer stages means simpler hazard handling, smaller silicon footprint, and lower power, at the cost of a slower clock frequency since each stage has more work to do.

Seven-stage and deeper pipelines allow each stage to contain less combinational logic, which means the critical path per stage is shorter and the clock can run faster. But deeper pipelines have higher branch misprediction penalties (more stages in flight to flush on a wrong prediction), more complex forwarding paths since results take longer to propagate back to earlier stages, and more pipeline registers. Whether the frequency gain outweighs the penalty cost depends heavily on the workload.

Branch misprediction flush cost vs pipeline depth:

3-stage: flush 1-2 instructions on mispredict

5-stage: flush 2-3 instructions on mispredict

7-stage: flush 4-5 instructions on mispredict

20-stage: flush 15-18 instructions on mispredict (Pentium 4 territory)

Superpipelining

Superpipelining is the strategy of increasing pipeline depth aggressively to chase clock frequency. The idea is to keep subdividing stages into finer and finer steps – 10, 12, 20 stages – so that the work per stage is minimal and the clock period becomes very short. The processor runs at a very high frequency as a result.

The Pentium 4’s NetBurst architecture went to 20 stages (and later 31 in Prescott) specifically to hit multi-GHz frequencies at a time when competitors were running slower clocks with shorter pipelines. It worked in terms of raw frequency, but the branch misprediction penalty grew to 15-20 cycles, and workloads with irregular control flow suffered badly. The frequency advantage did not always translate to real-world performance gains.

Superpipelining is fundamentally about getting more clock cycles per second out of the same logic by doing less work per cycle. It is a time-domain optimisation: squeeze the clock period down by reducing the amount of combinational logic each stage has to evaluate.

Superpipelining: split each stage into sub-stages

Normal EX stage (one cycle):

[reg_read -> alu_op -> alu_result] T = ~3ns, f_max ~333MHz

Superpipelined EX split into 3 sub-stages:

[reg_read] -> [alu_op] -> [alu_result] T = ~1ns each, f_max ~1GHz

More cycles per second, but each instruction takes more cycles to complete.

Penalty for a misprediction triples along with it.

Superscaling

Superscaling (superscalar) is a different axis entirely. Instead of doing less work per clock cycle to run faster, a superscalar processor does more work per clock cycle by issuing multiple instructions simultaneously. A dual-issue superscalar fetches, decodes, and executes two instructions per cycle. Four-wide superscalar does four.

Where superpipelining asks “how do we make each cycle shorter”, superscaling asks “how do we get more done in each cycle”. They are orthogonal strategies, and real high-performance processors often combine both – a wide superscalar core running at high frequency with a moderately deep pipeline.

Single-issue vs dual-issue throughput (ideal, no hazards):

Single-issue:

Cycle 1: I1 enters EX

Cycle 2: I2 enters EX -> 1 instruction/cycle

Dual-issue superscalar:

Cycle 1: I1 + I2 enter EX simultaneously

Cycle 2: I3 + I4 enter EX simultaneously -> 2 instructions/cycle

But this only works when I1 and I2 have no dependency between them:

add x1, x2, x3 # I1

add x4, x1, x5 # I2 -- reads x1, written by I1 -- cannot dual-issue

The hardware cost of superscaling is significant. The fetch unit must retrieve and align multiple instructions. The decode unit must handle all of them and identify dependencies between them. The issue logic must check whether each pair of instructions can safely execute in parallel, which means evaluating all inter-instruction dependencies for every combination of slots being considered. The register file needs more read ports. The forwarding network grows non-linearly with issue width because every execution unit’s result potentially needs to be forwarded to every other execution unit’s input. Verifying all of this correctly is substantially harder than a single-issue pipeline.

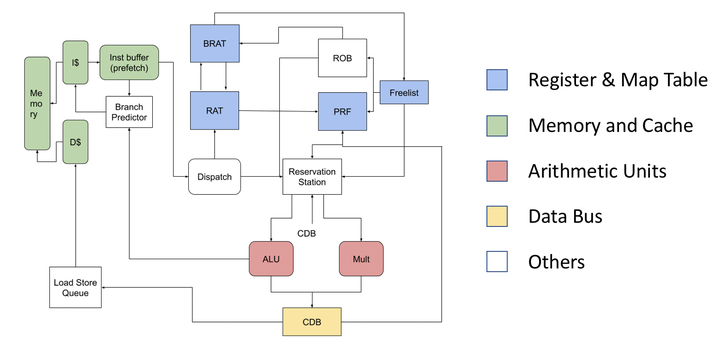

Out-of-Order Execution

Both superpipelining and basic superscalar designs are in-order: instructions are issued strictly in program order. Out-of-order (OOO) execution relaxes this constraint. Rather than stalling all issue slots when one instruction is waiting for a cache miss or a long-latency operation, an OOO core looks ahead into a window of upcoming instructions and issues any instruction whose operands are already ready, regardless of whether earlier instructions have completed.

This requires a reorder buffer to track in-flight instructions and ensure they commit to architectural state in program order (so exceptions still work correctly), a physical register file larger than the architectural register file to hold speculative results from instructions that have not yet committed, and a scheduler that tracks operand readiness across all in-flight instructions. Modern high-performance cores from Apple, AMD, and ARM are all wide OOO superscalar with hundreds of instructions in flight at any given moment.

OOO execution example:

Program order: lw x1, 0(x2) <- cache miss, stalls 100 cycles

add x3, x1, x4 <- depends on x1, must wait

add x5, x6, x7 <- no dependency on x1 at all

In-order processor: stalls at lw, add x5 waits 100 cycles doing nothing

OOO processor: issues add x5 while waiting for lw to complete

retires them in program order regardless

Amdahl’s Law and Pipeline Speedup

Amdahl’s Law gives a formal bound on the speedup achievable when only a fraction of a system is improved:

$$S = \frac{1}{(1 - p) + \frac{p}{n}}$$where $p$ is the fraction of execution time affected by the improvement and $n$ is the speedup factor applied to that fraction. As $n \to \infty$, the ceiling becomes $\frac{1}{1-p}$. The unaffected fraction $(1-p)$ is the hard limit.

Each design point above represents a different attempt to shrink $(1-p)$. Pipelining overlaps instruction execution across stages, but stalls from hazards and mispredictions reintroduce serial behaviour. Superscalar increases $n$ by issuing multiple instructions per cycle, but data dependencies cap $p$ because not all instruction pairs are independent. Deeper pipelines raise clock frequency but widen the misprediction penalty, adding back serial cycles through a different mechanism. The tradeoffs differ; the underlying constraint does not.

Where This Project Sits

I have explored several of these designs as part of a larger project on RV32IM processor implementations, covering single-cycle through pipelined and dual-issue superscalar variants, with notes on the tradeoffs encountered at each step. If you are interested in the broader comparison, the project lives at ../../projects/rose/rv32im/.

This post focuses specifically on the single-issue pipelined core and how far it can be pushed through microarchitectural optimisation. The superscalar variant exists and is used as a comparison point at the end, but the implementation details and tradeoffs of the superscalar design itself are covered separately in the project.

What a Pipeline Is

A processor pipeline breaks instruction execution into stages. Instead of one instruction completing fully before the next one starts, multiple instructions are in different stages of execution simultaneously, the way an assembly line works.

The classic five-stage pipeline for a RISC-style ISA is:

IF – Instruction Fetch. The program counter points to memory. The instruction at that address is fetched and loaded into a pipeline register. The PC is updated for the next cycle.

ID – Instruction Decode. The fetched instruction is decoded. The opcode tells you what operation to perform. The register specifiers tell you which registers to read. The register file is read here, and the immediate field is sign-extended. This is also where hazard detection happens in most baseline implementations.

EX – Execute. The ALU computes. For arithmetic, the result is produced here. For branches, the condition is evaluated. For load and store, the effective address is computed (base register plus offset).

MEM – Memory Access. Loads read from data memory. Stores write to data memory. Non-memory instructions pass through with their result.

WB – Write Back. The result, either from the ALU or from a load, is written back into the destination register.

This is the textbook model. In practice, every real design diverges from it in various ways, and understanding where and why is where the interesting engineering starts.

A simplified Verilog sketch of the pipeline register between IF and ID gives a sense of what “stage boundary” means in RTL:

// IF/ID pipeline register

always_ff @(posedge clk) begin

if (rst) begin

if_id_instr <= 32'h0000_0013; // NOP (addi x0, x0, 0)

if_id_pc <= 32'b0;

end else if (stall) begin

// hold current values -- bubble insertion on hazard

if_id_instr <= if_id_instr;

if_id_pc <= if_id_pc;

end else if (flush) begin

// squash the instruction -- wrong path fetch

if_id_instr <= 32'h0000_0013; // NOP

if_id_pc <= 32'b0;

end else begin

if_id_instr <= imem_rdata;

if_id_pc <= pc;

end

end

Every stage boundary has a register like this. The stall and flush signals are driven by the hazard and control units.

Why Pipelining Introduces Hazards

A pipeline assumes that when an instruction reaches the EX stage, its source operands are available. This breaks in two common ways.

Data hazards. If instruction N writes a register and instruction N+1 reads that same register, N+1 reaches the ID stage before N has written its result back to the register file. If you naively read the register file at ID, you get a stale value. The result of N does not reach WB until three cycles after ID, but N+1 needs it one cycle after N.

Control hazards. When a branch instruction is in the pipeline, the processor does not know yet whether to take the branch or fall through. It has to keep fetching instructions from somewhere. If it guesses wrong, those instructions must be squashed and the correct path fetched.

These are not minor edge cases. On real workloads, a significant fraction of instructions are branches, and load-use sequences (load followed immediately by an instruction that uses the loaded value) appear constantly in compiled code.

Data hazard -- RAW (Read After Write):

add x1, x2, x3 <- writes x1 at WB (cycle 5)

add x4, x1, x5 <- reads x1 at ID (cycle 3) -- x1 not ready yet!

Cycle: 1 2 3 4 5

I1: [IF] [ID] [EX] [MM] [WB] <- x1 written here

I2: [IF] [ID] [EX] [MM] <- x1 read here -- stale!

^

x1 needed here, but WB is 2 cycles away

Control hazard -- branch taken, wrong path fetched:

Cycle: 1 2 3 4 5

BEQ: [IF] [ID] [EX] ... <- branch resolves at end of EX (cycle 3)

I_seq1: [IF] [ID] [EX] <- fetched sequentially -- must squash

I_seq2: [IF] [ID] <- fetched sequentially -- must squash

Target: [IF] ... <- correct fetch starts cycle 4

2 wasted cycles (slots I_seq1 and I_seq2) every mispredicted or unknown branch.

Forwarding and Stalls: The Baseline Hazard Solution

The standard fix for data hazards is forwarding, also called bypassing. The insight is that the value is computed at the end of the EX stage. Even before it reaches WB and gets written to the register file, it is sitting in a pipeline register. If the next instruction needs it, you can wire that pipeline register output directly to the ALU input instead of waiting for the register file write.

This handles most data hazards with no stall. The exception is the load-use hazard. A load instruction does not produce its result until the end of the MEM stage, one stage later than an ALU instruction. If the very next instruction needs the loaded value, even with forwarding you need to stall one cycle, because the value simply is not available yet when EX starts for the following instruction.

The baseline implementation inserts a bubble (a stall cycle) whenever it detects a load-use hazard.

Forwarding paths in a standard 5-stage pipeline:

EX -> EX forwarding (1-cycle gap):

add x1, x2, x3 [EX produces x1]

add x4, x1, x5 [EX needs x1 -- forward from EX/MEM register]

MEM -> EX forwarding (2-cycle gap):

add x1, x2, x3 [MEM stage, result in MEM/WB register]

nop

add x4, x1, x5 [EX needs x1 -- forward from MEM/WB register]

Load-use -- forwarding cannot help (1-cycle stall required):

lw x1, 0(x2) [MEM reads memory -- result not ready until end of MEM]

add x4, x1, x5 [EX starts same cycle MEM is reading -- too early]

-> insert stall bubble

A forwarding unit in Verilog checks these conditions and selects the right source for each ALU input:

// EX stage forwarding mux for operand A (rs1)

always_comb begin

if (ex_mem_regwrite && (ex_mem_rd == id_ex_rs1) && (id_ex_rs1 != 0))

forward_a = 2'b10; // forward from EX/MEM (1-cycle gap)

else if (mem_wb_regwrite && (mem_wb_rd == id_ex_rs1) && (id_ex_rs1 != 0))

forward_a = 2'b01; // forward from MEM/WB (2-cycle gap)

else

forward_a = 2'b00; // use register file value

end

// ALU input A selected based on forwarding

assign alu_in_a = (forward_a == 2'b10) ? ex_mem_alu_result :

(forward_a == 2'b01) ? wb_data :

id_ex_rs1_val;

The hazard detection logic at the baseline level is conservative. It checks: is the instruction in the EX stage a load, and does the instruction in the ID stage read any register that the load writes? If yes, stall. The problem with this is that the condition is checked based purely on register numbers, not on whether the ID-stage instruction actually uses those registers in a meaningful way. Some instructions encode a register field that the instruction does not actually read. A conservative check stalls unnecessarily in those cases.

// Baseline (conservative) load-use hazard detection

assign load_use_hazard = (id_ex_memread) &&

((id_ex_rd == if_id_rs1) ||

(id_ex_rd == if_id_rs2));

// Problem: checks rs1 and rs2 fields by position in encoding,

// not whether the instruction in ID actually reads those registers.

// LUI, AUIPC, JAL have rs1/rs2 fields that are not used -- false stalls.

Metrics: How to Compare Pipeline Variants

Before describing any optimization, it helps to be precise about what is being measured and what the numbers mean.

Cycles. Raw clock count to complete a workload. Lower is better, but it only makes sense to compare cycles across implementations running the same binary on the same problem.

Retired instructions. The count of instructions that actually completed and committed their result. Flushed instructions from mispredictions do not count.

CPI – Cycles Per Instruction. Cycles divided by retired instructions. This tells you on average how many cycles each instruction took. A perfect pipeline with no hazards and no mispredictions would have a CPI of 1.0 for a single-issue design. Real CPIs are higher. Lower CPI means the pipeline is being used more efficiently.

IPC – Instructions Per Cycle. The reciprocal of CPI. Retired instructions divided by cycles. A single-issue pipeline has a theoretical maximum IPC of 1.0. Superscalar designs can exceed 1.0 because they issue multiple instructions per cycle.

CoreMark/MHz. CoreMark is a standardized benchmark that exercises integer operations, control flow, and memory access in a way that is meant to represent embedded workloads. Dividing the score by the clock frequency in MHz gives a frequency-independent figure that represents how much work the processor gets done per million clock cycles. This is the main comparison metric throughout this project because it normalizes out frequency, which is an implementation-technology concern rather than a microarchitecture concern.

The formula used:

CoreMark/MHz = (ITERATIONS * 1,000,000) / cycles

Example with baseline numbers:

ITERATIONS = 100

cycles = 38,877,313

CoreMark/MHz = (100 * 1,000,000) / 38,877,313

= 100,000,000 / 38,877,313

= 2.572194225

CPI = cycles / retired_instructions

= 38,877,313 / 29,543,xyz (retired instr count from simulation)

= 1.315809553

IPC = 1 / CPI = 1 / 1.315809553 = 0.7599...

All numbers in this post are for -O3 optimization and 100 iterations, which gives a long enough run that startup overhead is negligible and the numbers are stable. CRC checks were verified consistent across all runs.

riscv32-unknown-elf-gcc -O3 -ffreestanding -fno-builtin -march=rv32im -mabi=ilp32 -nostdlib -Ttext 0x0 \

-I scripts/coremark -DITERATIONS=100 -DVALIDATION_RUN=1 -DTOTAL_DATA_SIZE=2000 \

-o coremark_i100_o3.elf \

scripts/coremark/crt0.S \

scripts/coremark/core_main.c \

scripts/coremark/core_list_join.c \

scripts/coremark/core_matrix.c \

scripts/coremark/core_state.c \

scripts/coremark/core_util.c \

scripts/coremark/core_portme.c \

-lgcc

python3 scripts/elf2hex.py coremark_i100_o3.elf hex/inst_mem.hex hex/data_mem.hex

make verilator-prog

./obj_dir/Vtb_program

cat tb_program_results.txt

cp tb_program_results.txt tb_program_results_i100_o3.txt

python3 - <<'PY'

import re

def parse(path):

txt = open(path).read()

cycles = int(re.search(r"Total cycles:\s*(\d+)", txt).group(1))

inst = int(re.search(r"Retired instructions:\s*(\d+)", txt).group(1))

return cycles, inst

runs = [

("O2", 1, "tb_program_results_i1_o2.txt"),

("O2", 10, "tb_program_results_i10_o2.txt"),

("O2", 100, "tb_program_results_i100_o2.txt"),

("O3", 1, "tb_program_results_i1_o3.txt"),

("O3", 10, "tb_program_results_i10_o3.txt"),

("O3", 100, "tb_program_results_i100_o3.txt"),

("Ofast", 1, "tb_program_results_i1_ofast.txt"),

("Ofast", 10, "tb_program_results_i10_ofast.txt"),

("Ofast", 100, "tb_program_results_i100_ofast.txt"),

]

for opt, iters, path in runs:

cycles, inst = parse(path)

cpi = cycles / inst

ipc = inst / cycles

cm_mhz = (iters * 1_000_000) / cycles

print(f"{opt} ITER={iters}: cycles={cycles}, inst={inst}, CPI={cpi:.9f}, IPC={ipc:.9f}, CoreMark/MHz={cm_mhz:.9f}")

def cm(opt, it):

path = {

("O2", 1): "tb_program_results_i1_o2.txt",

("O2", 10): "tb_program_results_i10_o2.txt",

("O2", 100): "tb_program_results_i100_o2.txt",

("O3", 1): "tb_program_results_i1_o3.txt",

("O3", 10): "tb_program_results_i10_o3.txt",

("O3", 100): "tb_program_results_i100_o3.txt",

("Ofast", 1): "tb_program_results_i1_ofast.txt",

("Ofast", 10): "tb_program_results_i10_ofast.txt",

("Ofast", 100): "tb_program_results_i100_ofast.txt",

}[(opt, it)]

cycles, _ = parse(path)

return (it * 1_000_000) / cycles

for it in (1, 10, 100):

print(f"ITER={it} O3 vs O2 improvement: {(cm('O3', it)/cm('O2', it) - 1) * 100:.3f}%")

print(f"ITER={it} Ofast vs O2 improvement: {(cm('Ofast', it)/cm('O2', it) - 1) * 100:.3f}%")

print(f"ITER={it} Ofast vs O3 delta: {(cm('Ofast', it)/cm('O3', it) - 1) * 100:.3f}%")

for opt in ("O2", "O3", "Ofast"):

print(f"{opt} ITER=100 vs ITER=10 delta: {(cm(opt, 100)/cm(opt, 10) - 1) * 100:.3f}%")

PY

The Starting Point: Plain Five-Stage Pipeline

The baseline implementation is a straightforward five-stage pipeline with forwarding and stall-on-load-use. There is no branch prediction. When a branch is encountered, the pipeline stalls or flushes based on the branch outcome, which is only known at the end of the EX stage.

Baseline result at O3, 100 iterations:

- Cycles: 38,877,313

- CPI: 1.315809553

- CoreMark/MHz: 2.572194225

A CPI of 1.316 means on average every instruction takes 1.316 cycles. The overhead above 1.0 is coming from two main sources: branch penalties (cycles wasted fetching down the wrong path or stalling while the branch resolves) and load-use stalls (cycles wasted waiting for load results).

This is the floor. Everything that follows is an attempt to push that CPI closer to 1.0.

CPI breakdown (conceptual) for baseline:

Ideal CPI (no hazards): 1.000

+ load-use stall contribution: ~0.10

+ branch penalty contribution: ~0.21

-------

Observed CPI: ~1.316

Every 0.001 reduction in CPI on this workload saves ~30,000 cycles.

Step 1: Moving Branch Resolution Earlier

In the baseline, branches are resolved at the end of the EX stage. The branch condition is computed by the ALU, the result comes out, and only then does the processor know whether to take the branch or not. Meanwhile, two instructions after the branch have already entered the pipeline. If the branch is taken, those two instructions must be squashed. That is a two-cycle penalty every time a branch is taken and was not predicted taken.

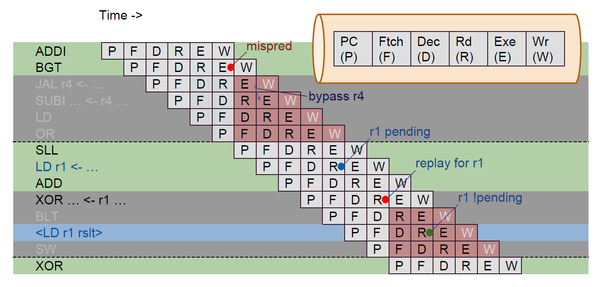

The fix is to move branch resolution to the ID stage. Instead of using the main ALU in EX, add a small comparator in ID that can evaluate branch conditions using the register values read during ID. This means the branch outcome is known one stage earlier, which cuts the worst-case penalty from two cycles to one.

To make this work, you also need operand forwarding specifically for the branch comparator in ID. If one of the branch operands is the result of the immediately preceding instruction (which is in EX when the branch is in ID), you need to forward that value into the comparator.

The pipeline also needs a redirect path: if the decision made in ID differs from what the fetch stage assumed (which in the baseline, with no prediction, is always sequential), the fetch stage must be redirected to the correct target.

Branch in EX (baseline) -- 2-cycle penalty:

Cycle: 1 2 3 4 5

BEQ: [IF] [ID] [EX] <- resolves at end of cycle 3

I+1: [IF] [ID] <- flush <- wrong path, squash

I+2: [IF] <- flush <- wrong path, squash

Target: [IF] ... <- correct fetch starts cycle 4

Penalty: 2 bubbles

Branch in ID (optimised) -- 1-cycle penalty:

Cycle: 1 2 3 4

BEQ: [IF] [ID] <- resolves at end of cycle 2

I+1: [IF] <- flush <- wrong path, squash

Target: [IF] ... <- correct fetch starts cycle 3

Penalty: 1 bubble

The ID-stage comparator for a BEQ branch looks something like this in RTL:

// Branch comparator in ID stage

// forward from EX stage if the previous instruction wrote the branch source

wire [31:0] br_rs1 = (ex_rd_valid && ex_rd == id_rs1) ? ex_alu_result : id_rs1_val;

wire [31:0] br_rs2 = (ex_rd_valid && ex_rd == id_rs2) ? ex_alu_result : id_rs2_val;

wire branch_taken;

always_comb begin

case (id_funct3)

3'b000: branch_taken = (br_rs1 == br_rs2); // BEQ

3'b001: branch_taken = (br_rs1 != br_rs2); // BNE

3'b100: branch_taken = ($signed(br_rs1) < $signed(br_rs2)); // BLT

3'b101: branch_taken = ($signed(br_rs1) >= $signed(br_rs2)); // BGE

3'b110: branch_taken = (br_rs1 < br_rs2); // BLTU

3'b111: branch_taken = (br_rs1 >= br_rs2); // BGEU

default: branch_taken = 1'b0;

endcase

end

wire [31:0] branch_target = id_pc + id_imm_b; // PC-relative target

Result after early branch resolution:

- Cycles: 38,877,313 to 35,812,817

- CPI: 1.315809553 to 1.212091142

- CoreMark/MHz: 2.572194225 to 2.792296400

- Improvement: +8.557%

This is the single largest gain in the entire optimization journey. Moving branch resolution one stage earlier, with the correct forwarding logic to support it, gave over 8.5% improvement. Branch penalties are expensive, and this addresses them at the source.

In Amdahl’s terms, branch penalties represent a portion of $(1-p)$: cycles spent doing no useful work that reduce the effective parallelism of the pipeline. Moving resolution to ID converts a two-cycle serial penalty into a one-cycle penalty, directly increasing $p$ for the workload. At 8.557%, this is the largest single reduction in the serial fraction across all optimisations, which is consistent with branch instructions comprising a large share of dynamic instruction count in CoreMark’s inner loops.

Step 2: Branch Prediction – Direction Prediction

Early branch resolution helps, but it does not eliminate the penalty. The pipeline still does not know the branch outcome until ID. In between IF and ID, the processor fetched one instruction sequentially. If the branch is taken, that instruction must be squashed, costing one cycle.

Branch prediction is the mechanism that guesses the branch outcome before it is known, so the fetch stage can speculatively fetch from the predicted path. A correct prediction eliminates the penalty entirely. An incorrect prediction incurs a flush, which is slightly more expensive than the baseline because now there are instructions from the wrong path in the pipeline.

Three direction predictor types were evaluated before committing to one for the main optimization line:

Static backward-taken. Loops branch backward (the target is a lower address than the branch itself), and loops are frequent. A static rule that predicts backward branches as taken and forward branches as not-taken is simple and requires no state, but it works well for loops and costs nothing in terms of storage or update logic.

// Static backward-taken predictor -- no state, pure combinational

wire predict_taken = (branch_target < if_pc); // backward = taken

1-bit dynamic. Each branch has a one-bit entry in a table indexed by the branch’s PC. The bit records whether the branch was taken last time. Prediction is: do the same as last time. When a branch alternates (taken, not-taken, taken, not-taken), this mispredicts every single time. This happens at loop exits: the loop runs many times (taken), then exits once (not-taken). The 1-bit predictor flips to not-taken, then the loop starts again and mispredicts the first iteration.

// 1-bit predictor (BHT = Branch History Table)

localparam BHT_ENTRIES = 256;

reg bht [0:BHT_ENTRIES-1]; // 1 bit per entry

wire [7:0] bht_idx = if_pc[9:2]; // index by PC bits

wire predict_taken = bht[bht_idx];

// Update on branch resolution

always_ff @(posedge clk) begin

if (branch_resolved)

bht[resolved_pc[9:2]] <= actual_taken; // flip to actual outcome

end

// Problem: loop runs 100x (taken), exits (not-taken):

// bht flips to 0, next iteration of loop mispredicts immediately

2-bit saturating counter. Each entry is a two-bit counter: strongly not-taken, weakly not-taken, weakly taken, strongly taken. A single misprediction does not immediately flip the prediction. This adds hysteresis. A loop that exits once after running 100 iterations: the counter was in the strongly-taken state, one not-taken outcome moves it to weakly taken, not immediately flipping to not-taken. The next time the loop starts, the counter is still in a taken state and predicts correctly. The loop exit will mispredict once, but the loop body predicts correctly throughout. This is better than 1-bit in almost every scenario that involves loops.

// 2-bit saturating counter predictor

localparam BHT_ENTRIES = 256;

reg [1:0] bht [0:BHT_ENTRIES-1]; // 2 bits: 00=SN, 01=WN, 10=WT, 11=ST

wire [7:0] bht_idx = if_pc[9:2];

wire predict_taken = bht[bht_idx][1]; // MSB is the prediction

// Update: saturating increment/decrement

always_ff @(posedge clk) begin

if (branch_resolved) begin

if (actual_taken)

bht[resolved_pc[9:2]] <= (bht[resolved_pc[9:2]] == 2'b11) ?

2'b11 : bht[resolved_pc[9:2]] + 1;

else

bht[resolved_pc[9:2]] <= (bht[resolved_pc[9:2]] == 2'b00) ?

2'b00 : bht[resolved_pc[9:2]] - 1;

end

end

// Loop exit behaviour:

// counter at 11 (ST), branch not-taken at exit -> goes to 10 (WT)

// next loop iteration: counter still says taken -> correct prediction

// one mispredict at exit, not two like 1-bit would cause

Results of the predictor sweep (on the pipeline with early branch resolution but before BTB and load-use work):

| Predictor | Cycles | CoreMark/MHz |

|---|---|---|

| Static backward-taken | 35,390,851 | 2.825589020 |

| 1-bit dynamic | 34,640,813 | 2.886768275 |

| 2-bit saturating | 34,185,095 | 2.925251488 |

The 2-bit predictor won, so the main optimization line continued with it.

Combining early branch resolution with the 2-bit predictor:

- Cycles: 35,812,817 to 33,466,269

- CPI: 1.212091142 to 1.132671809

- CoreMark/MHz: 2.792296400 to 2.988083315

- Improvement over previous: +6.547%

Step 3: The Branch Target Buffer

Direction prediction tells you whether a branch will be taken or not. But that is only half the problem. If you predict a branch as taken, you also need to know where to fetch from, which means you need the branch target address.

In the baseline, the branch target is computed in the ID stage by adding the PC to the immediate offset. This means even a correctly-predicted-taken branch still forces the pipeline to fetch from the sequential PC during the one cycle the branch is in IF but has not yet been decoded. When the decoder identifies the target address in ID, it redirects the fetch unit. One wasted cycle, every time a taken branch is encountered, even when the direction is predicted correctly.

The Branch Target Buffer (BTB) fixes this. It is a small table indexed by the current PC. When an instruction is fetched, the BTB is looked up simultaneously. If the current PC matches an entry, the BTB provides the predicted target address and the fetch unit can immediately start fetching from there on the next cycle – before the branch even reaches the decode stage.

Practically, the BTB is a direct-mapped or set-associative cache of (PC, target address) pairs. When a branch is first executed, its target is recorded in the BTB. On subsequent encounters of the same branch, the fetch unit predicts the target from the BTB without waiting for the decode stage to compute it.

The direction predictor and BTB work together. The direction predictor says taken or not-taken. If taken, the BTB provides the target. If both are correct, the branch has zero penalty. If either is wrong, there is a flush and redirect back to the correct path.

Without BTB (direction predicted, target unknown until ID):

Cycle: 1 2 3

PC=100 [IF: BEQ] [ID: compute target=200, redirect]

PC=104 [IF: I+1] <- squash (wrong path, fetched sequentially)

PC=200 [IF: target] <- correct fetch, 1 wasted cycle even on correct prediction

With BTB (target cached from prior execution):

Cycle: 1 2

PC=100 [IF: BEQ, BTB->200] [IF: target at 200] <- zero penalty on hit

^

BTB lookup happens in same cycle as fetch

next PC = 200 immediately if direction says taken

A direct-mapped BTB implementation:

// Direct-mapped BTB: 256 entries, indexed by PC[9:2]

localparam BTB_ENTRIES = 256;

reg [31:0] btb_target [0:BTB_ENTRIES-1];

reg [21:0] btb_tag [0:BTB_ENTRIES-1]; // upper PC bits for validation

reg btb_valid [0:BTB_ENTRIES-1];

wire [7:0] btb_idx = if_pc[9:2];

wire [21:0] btb_tag_in = if_pc[31:10];

// BTB lookup (combinational, runs in parallel with instruction fetch)

wire btb_hit = btb_valid[btb_idx] && (btb_tag[btb_idx] == btb_tag_in);

wire [31:0] btb_predicted_target = btb_target[btb_idx];

// Next PC logic: use BTB target if hit and direction predictor says taken

wire [31:0] next_pc = (btb_hit && predict_taken) ? btb_predicted_target :

(redirect_from_id) ? redirect_target :

if_pc + 4;

// Update BTB when a branch resolves

always_ff @(posedge clk) begin

if (branch_resolved && actual_taken) begin

btb_target[resolved_pc[9:2]] <= actual_target;

btb_tag [resolved_pc[9:2]] <= resolved_pc[31:10];

btb_valid [resolved_pc[9:2]] <= 1'b1;

end

end

Result after adding BTB:

- Cycles: 33,466,269 to 32,347,569

- CPI: 1.132671809 to 1.094809209

- CoreMark/MHz: 2.988083315 to 3.091422419

- Improvement over previous: +3.458%

Step 4: Hazard Optimisation – Removing False Load-Use Stalls

The baseline hazard detection logic stalls whenever the instruction in EX is a load and the instruction in ID has any register field that matches the load’s destination. The problem is that it checks register numbers without checking whether the ID-stage instruction actually reads those registers.

In RV32I, many instructions have register fields in their encoding even when the instruction does not semantically use that field. Store instructions, for example, use rs2 as their data source but the baseline might check rs1 as well. Upper immediate instructions (LUI, AUIPC) have no source registers at all but still have bit fields at the rs1 position in the encoding. The hazard logic, checking purely on register numbers, generates a stall that is not actually necessary.

The fix is source-aware hazard detection. The decoder produces explicit signals: use_rs1 and use_rs2, one bit each, indicating whether the instruction actually reads rs1 and rs2. The hazard detection logic only triggers a stall when the load destination matches a register that the ID-stage instruction actually uses.

// Baseline: checks register fields blindly

assign load_use_hazard = id_ex_memread &&

((id_ex_rd == if_id_rs1) || (id_ex_rd == if_id_rs2));

// Optimised: decoder tells us which registers are actually read

wire use_rs1, use_rs2;

// Example decoder output signals

assign use_rs1 = (opcode == OP_REG) || // R-type

(opcode == OP_IMM) || // I-type ALU

(opcode == OP_LOAD) || // loads (base addr)

(opcode == OP_STORE) || // stores (base addr)

(opcode == OP_BRANCH) || // branches

(opcode == OP_JALR); // jalr

assign use_rs2 = (opcode == OP_REG) || // R-type

(opcode == OP_STORE) || // stores (data)

(opcode == OP_BRANCH); // branches

// LUI, AUIPC, JAL: use_rs1=0, use_rs2=0 -- no false stalls

assign load_use_hazard = id_ex_memread &&

((use_rs1 && (id_ex_rd == if_id_rs1)) ||

(use_rs2 && (id_ex_rd == if_id_rs2)));

Result:

- Cycles: 32,347,569 to 32,346,765

- CoreMark/MHz: +0.002%

The gain here is tiny. False positive load-use hazards are rare in the CoreMark binary at O3. The optimization is still correct to make (false stalls are wasteful and technically a design error), but this is not a significant performance driver for this workload.

Step 5: Load-Use Relaxation for Store Data

A true load-use hazard is when a load is immediately followed by an instruction that needs the loaded value. The result has to come from memory before it can be used. There is no way around this without changing the forwarding path.

But one specific case can be relaxed. Consider this sequence:

lw x1, 0(x2) # load into x1

sw x1, 4(x3) # store x1 to memory

The store instruction reads x1 for the data to write. In the baseline pipeline, this triggers a load-use stall. But the store data does not need to be available in the ID stage or even at the start of EX. The store address (computed from x3 and the offset) is produced in EX. The store data (x1) is written to memory in the MEM stage. If you add a forwarding path from the MEM stage output of the first instruction to the MEM stage data input of the second instruction, you can allow the store to proceed without stalling, forwarding the load result at the point it is actually needed.

Without store-data forwarding:

Cycle: 1 2 3 4 5 6 7

LW x1: [IF] [ID] [EX] [MM] [WB]

SW x1: [IF] [ID] [--] [EX] [MM] [WB] <- stall bubble at ID

^

stall inserted here -- x1 not ready

With MEM->MEM forwarding for store data:

Cycle: 1 2 3 4 5 6

LW x1: [IF] [ID] [EX] [MM] [WB]

SW x1: [IF] [ID] [EX] [MM] [WB] <- no stall

^

x1 forwarded from LW's MEM output

to SW's MEM data input at this point

// MEM-stage store data forwarding

// If the previous instruction was a load writing rd,

// and current instruction is a store reading rs2 (data),

// forward the load result directly to the store data port.

wire mem_mem_store_fwd = mem_wb_memread &&

(mem_wb_rd == ex_mem_rs2) &&

(ex_mem_opcode == OP_STORE);

wire [31:0] store_data = mem_mem_store_fwd ? mem_wb_load_data :

ex_mem_rs2_val;

Result:

- Cycles: 32,346,765 to 32,342,681

- CoreMark/MHz: +0.013%

Again small, because this specific pattern (load immediately followed by a store using the loaded value) is not extremely common in the CoreMark binary at O3. But the forwarding path is correct and eliminates a class of unnecessary stalls.

Step 6: Generalised Load-Use Forwarding

The previous two steps were incremental. This step generalises the entire load-use forwarding strategy.

The core insight is this: a load-use stall is required only when the consuming instruction needs the load result in the ID stage. Which instructions need their operands in ID? In this implementation: branches (because branch resolution was moved to ID and the comparator reads register values there) and JALR (because its target computation uses a register value and is also resolved early).

For all other consumers – ALU instructions, stores, other loads – the operand is needed at the start of the EX stage. The load result comes out of the MEM stage. The question is: can you forward from MEM to EX?

Yes. With an additional forwarding mux at the EX stage inputs, the MEM-stage output can be fed directly to the EX stage of the following instruction. Load-use stalls are only inserted when the consuming instruction is a branch or JALR. For every other consumer, no stall is necessary because the MEM-to-EX forwarding path delivers the value exactly when it is needed.

This is called full load-use forwarding or generalised MEM-to-EX forwarding. The standard forwarding unit in a naive pipeline handles EX-to-EX (when the previous instruction produced its result in EX and the current one needs it in EX) and MEM-to-EX (for the two-instruction gap). Generalised forwarding adds the path for load results one cycle later than normal ALU results.

Without fulu -- stall on any load-use:

Cycle: 1 2 3 4 5 6

LW x1: [IF] [ID] [EX] [MM] [WB]

ADD x4: [IF] [ID] [--] [EX] [MM] <- stall bubble, x1 not ready at EX start

^

stall because x1 arrives at end of cycle 4 (MM),

but ADD needs it at start of cycle 4 (EX)

With fulu -- MEM->EX forwarding, no stall for ALU consumers:

Cycle: 1 2 3 4 5 6

LW x1: [IF] [ID] [EX] [MM] [WB]

ADD x4: [IF] [ID] [EX] [MM] [WB] <- no stall

^

x1 forwarded from LW's MM output to ADD's EX input

at the start of cycle 4 -- arrives just in time

// Generalised forwarding unit -- fulu additions

// Standard paths: EX->EX (fwd_a/b = 10), MEM->EX (fwd_a/b = 01)

// fulu adds: MEM_load->EX (one cycle later than normal MEM->EX)

// The critical distinction: only stall for ID-stage consumers (branches, JALR)

wire needs_in_id = (id_opcode == OP_BRANCH) || (id_opcode == OP_JALR);

// Load-use stall only when the consumer needs the value in ID

assign load_use_hazard = id_ex_memread &&

needs_in_id &&

((use_rs1 && id_ex_rd == if_id_rs1) ||

(use_rs2 && id_ex_rd == if_id_rs2));

// For EX-stage consumers, the MEM->EX forwarding path handles it:

// (this is the fulu forwarding mux extension)

always_comb begin

// Forward A

if (ex_mem_regwrite && ex_mem_rd != 0 && ex_mem_rd == id_ex_rs1)

forward_a = FWD_EX_MEM; // 1-cycle gap, ALU result

else if (mem_wb_regwrite && mem_wb_rd != 0 && mem_wb_rd == id_ex_rs1)

forward_a = FWD_MEM_WB; // 2-cycle gap, covers load result (fulu)

else

forward_a = FWD_NONE;

end

Result:

- Cycles: 32,342,681 to 31,709,661

- CPI: 1.094643774 to 1.073219100

- CoreMark/MHz: 3.091889630 to 3.153613027

- Improvement over previous: +1.996%

Nearly 2% from generalising the forwarding path. This is the most impactful hazard optimisation in the set.

Step 7: Tournament (Hybrid) Branch Predictor

After the load-use forwarding work, attention returned to branch prediction. A separate experiment line tracked global branch history predictors and hybrid approaches.

A local predictor (what was being used) indexes its prediction table by the branch’s own PC. The assumption is that a branch’s own history is the best predictor of its next outcome. This works well for loops, where the same branch runs many times and its own history is informative.

A global predictor indexes by a shift register of recent branch outcomes from any branch, not just the current one. The assumption is that correlations between different branches matter. If two branches in a function are strongly correlated – one being taken implies the other will be taken – a global predictor can capture that because the global history contains the outcome of the first branch when the second one is being predicted. A local predictor cannot see this.

A tournament predictor (also called a meta-predictor or chooser-based hybrid) maintains both a local and a global predictor, plus a chooser table that records which of the two was more accurate for each branch. The chooser itself is indexed by branch PC and updated: when the local predictor is right and the global predictor is wrong, the chooser moves toward preferring local; when the reverse happens, it moves toward global. At runtime, the chooser selects the prediction from whichever predictor has been more accurate for this particular branch.

Tournament predictor structure:

PC ──┬──> Local BHT ──> local_pred ──┐

│ ├──> [chooser] ──> final_pred

└──> Global BHT ──> global_pred ──┘ ^

(indexed by indexed by PC,

global history tracks which is better

shift register) per branch

Chooser update:

if local_pred correct, global wrong -> chooser[pc] += 1 (prefer local)

if global_pred correct, local wrong -> chooser[pc] -= 1 (prefer global)

if both right or both wrong -> chooser[pc] unchanged

// Tournament predictor (simplified)

localparam ENTRIES = 256;

reg [1:0] local_bht [0:ENTRIES-1]; // 2-bit local counters

reg [1:0] global_bht [0:ENTRIES-1]; // 2-bit global counters

reg [1:0] chooser [0:ENTRIES-1]; // 2-bit chooser: MSB=1 prefer local

reg [7:0] ghr; // global history register (8-bit)

wire [7:0] idx = if_pc[9:2];

wire [7:0] gbl_idx = ghr ^ if_pc[9:2]; // XOR index for global

wire local_pred = local_bht[idx][1];

wire global_pred = global_bht[gbl_idx][1];

wire use_local = chooser[idx][1];

wire predict_taken = use_local ? local_pred : global_pred;

// Update on resolution

always_ff @(posedge clk) begin

if (branch_resolved) begin

// update local

// update global with ghr XOR pc

// update ghr: shift in actual outcome

ghr <= {ghr[6:0], actual_taken};

// update chooser based on which was right

if (local_correct && !global_correct)

chooser[res_idx] <= sat_inc(chooser[res_idx]);

else if (global_correct && !local_correct)

chooser[res_idx] <= sat_dec(chooser[res_idx]);

end

end

Results of the predictor alternatives explored separately:

| Variant | Cycles | CoreMark/MHz |

|---|---|---|

| Global predictor | 34,709,649 | 2.881043251 |

| Tuned global | 34,527,599 | 2.896233822 |

| Hybrid/tournament | 34,021,025 | 2.939358823 |

These were explored on a standalone pipeline line. The tournament predictor was then substituted into the full optimised pipeline (replacing the 2-bit local predictor while keeping BTB, hazard optimisations, and full load-use forwarding):

- Cycles: 31,709,661 to 31,566,209

- CPI: 1.073219100 to 1.068363941

- CoreMark/MHz: 3.153613027 to 3.167944557

- Improvement over previous: +0.455%

Step 8: JAL Fast Path and Return Address Stack

Two specific control flow patterns that the branch predictor does not efficiently handle:

JAL (jump and link). This is an unconditional direct jump. It has no direction to predict – it always jumps. The BTB handles it by providing the target, but without special handling, the pipeline still fetches the sequential instruction after JAL during the one cycle JAL is in IF before the BTB lookup resolves. Adding a JAL fast path in the IF stage partially decodes the instruction’s opcode while it is being fetched and immediately computes the PC-relative target, eliminating the one-cycle fetch bubble that would otherwise occur.

JALR (jump and link register). This is an indirect jump whose target is a register value plus an offset. This is how function returns work in RISC-V: ret is jalr x0, 0(ra), which jumps to the address in the return address register. The BTB can predict the target if a function is always called from one place, but when a function is called from multiple call sites, the return address is different each time. The BTB entry for the ret instruction keeps getting overwritten with whatever the most recent return address was, and mispredicts constantly for any function called from more than one location.

Why BTB fails for returns from multi-callsite functions:

call site A: jal ra, foo <- return addr = A+4

call site B: jal ra, foo <- return addr = B+4

foo:

...

ret <- BTB entry for ret PC keeps alternating

between A+4 and B+4 depending on call order

-> mispredict on every return

RAS behaviour:

call from A: push A+4 onto RAS

foo executes

ret: pop A+4 from RAS -> correct, no mispredict

call from B: push B+4 onto RAS

foo executes

ret: pop B+4 from RAS -> correct, no mispredict

A Return Address Stack (RAS) is a small hardware stack dedicated to this purpose. Every time JAL or JAL-style call instructions execute, the return address (PC+4) is pushed onto the RAS. Every time JALR is identified as a return by matching the ret pattern (destination x0, source ra), the top of the RAS is popped and used as the predicted target. Since calls and returns are naturally paired – every return corresponds to a prior call – the RAS correctly predicts the return target regardless of how many call sites exist, as long as the RAS is deep enough to cover the maximum call depth in the program.

// Return Address Stack (RAS) -- 8 entries deep

localparam RAS_DEPTH = 8;

reg [31:0] ras_stack [0:RAS_DEPTH-1];

reg [2:0] ras_ptr; // stack pointer

// Detect JAL (call) -- push return address

wire is_jal = (if_instr[6:0] == 7'b1101111);

wire is_jalr = (if_instr[6:0] == 7'b1100111);

// Heuristic: jal x1/ra = call, jalr x0, 0(ra) = return

wire is_call = is_jal && (if_instr[11:7] == 5'd1); // rd = ra

wire is_return = is_jalr && (if_instr[11:7] == 5'd0) // rd = x0

&& (if_instr[19:15] == 5'd1); // rs1 = ra

always_ff @(posedge clk) begin

if (is_call) begin

ras_stack[ras_ptr] <= if_pc + 4; // push return address

ras_ptr <= ras_ptr + 1;

end else if (is_return && ras_ptr != 0) begin

ras_ptr <= ras_ptr - 1; // pop

end

end

wire [31:0] ras_top = ras_stack[ras_ptr - 1];

// JAL fast path: detect in IF and compute target immediately

wire [31:0] jal_target = if_pc + {{11{if_instr[31]}}, if_instr[31],

if_instr[19:12], if_instr[20],

if_instr[30:21], 1'b0};

wire [31:0] next_pc =

is_return ? ras_top : // RAS prediction for ret

is_jal ? jal_target : // JAL fast path

(btb_hit && pred_taken)? btb_target : // BTB + direction pred

redirect_from_id ? redirect_tgt : // late redirect

if_pc + 4; // sequential

Result:

- Cycles: 31,566,209 to 31,540,922

- CPI: 1.068363941 to 1.067508098

- CoreMark/MHz: 3.167944557 to 3.170484363

- Improvement over previous: +0.080%

The gain is small because CoreMark’s call structure is not deeply nested. But RAS correctness matters for programs with deeper or more varied call graphs.

The Complete Picture: From Baseline to Final

| Variant | CPI | CoreMark/MHz | Gain over previous |

|---|---|---|---|

| Baseline pipeline | 1.315809 | 2.572194 | baseline |

| + Early branch resolution | 1.212091 | 2.792296 | +8.557% |

| + 2-bit direction predictor | 1.132671 | 2.988083 | +6.547% |

| + BTB | 1.094809 | 3.091422 | +3.458% |

| + False hazard cleanup | 1.094781 | 3.091499 | +0.002% |

| + Store-data forwarding | 1.094643 | 3.091889 | +0.013% |

| + Generalised load forwarding | 1.073219 | 3.153613 | +1.996% |

| + Tournament predictor | 1.068363 | 3.167944 | +0.455% |

| + JAL fast path + RAS | 1.067508 | 3.170484 | +0.080% |

Total improvement from baseline: +23.26% in CoreMark/MHz, CPI reduced from 1.3158 to 1.0675.

CPI waterfall -- where the gains came from:

1.3158 baseline

-0.104 early branch resolution (biggest single win)

-0.079 2-bit direction predictor

-0.038 BTB

-0.000 false hazard cleanup

-0.000 store-data forwarding

-0.021 generalised load forwarding (biggest hazard win)

-0.005 tournament predictor

-0.001 JAL fast path + RAS

--------

1.0675 final

How Close Did This Get to Superscalar?

The superscalar implementation (dual-issue, in-order) run on the same binary gives:

- Cycles: 30,832,113

- CPI: 1.043518297

- CoreMark/MHz: 3.243371611

The final pipelined result:

- Cycles: 31,540,922

- CPI: 1.067508098

- CoreMark/MHz: 3.170484363

Gap: 3.243371611 - 3.170484363 = 0.072887 CoreMark/MHz, about 2.299%.

A single-issue pipeline, properly optimized, comes within 2.3% of a dual-issue superscalar on this benchmark. That is a striking result. The reason it is even possible is that the superscalar in this comparison is not heavily optimized. It can issue two instructions per cycle, giving it a theoretical IPC ceiling of 2.0, but its actual CPI is 1.043, meaning it is capturing only a small fraction of that theoretical advantage. The single-issue pipeline, by eliminating unnecessary stalls and predicting branches well, has squeezed its CPI down to where the gap becomes very small.

Theoretical vs actual IPC:

Superscalar theoretical max IPC: 2.000 (dual-issue)

Superscalar actual IPC: 0.958 (= 1/1.043)

Superscalar IPC utilisation: 47.9% -- barely using its width

Single-issue theoretical max IPC: 1.000

Single-issue actual IPC: 0.937 (= 1/1.0675)

Single-issue IPC utilisation: 93.7% -- nearly saturating

The optimised single-issue core is using 93.7% of its theoretical ceiling.

The unoptimised superscalar is using less than half of its own ceiling.

CoreMark is Not the Whole Story

CoreMark/MHz is the primary comparison metric used throughout this project, but it would be a mistake to treat it as a complete measure of processor performance.

CoreMark is loop-heavy by design. It runs the same operations many times over in tight loops. This is ideal for a branch predictor to learn. After the first few iterations, the 2-bit counters are in stable states, the BTB has all the targets cached, the RAS has the call depth pattern memorised, and almost every branch is predicted correctly. The benchmark rewards exactly the kind of steady-state loop prediction that this implementation optimizes for.

Real programs are not like this. A compiler, an operating system kernel, a server handling varied requests – these have highly irregular control flow. Different call paths on every request, data-dependent branches that have genuinely unpredictable outcomes, long stretches of code that execute rarely. A branch predictor that is extremely accurate on a tight loop does not automatically generalize to a program doing something different on every few hundred instructions.

CoreMark inner loop (conceptual structure):

for (i = 0; i < N; i++) { <- backward branch, loops N times

result += matrix_mul(...); <- predictable, same path every iteration

result ^= linked_list(...); <- same structure each time

result += state_machine(...);

}

// Branch predictor learns this pattern after 2-3 iterations

// and predicts correctly for all remaining N-3 iterations.

// This is the ideal scenario for a BHT/BTB setup.

Irregular program (e.g., a simple interpreter):

while (true) {

opcode = fetch_next();

switch (opcode) { <- highly variable branch target

case ADD: ... <- different path each instruction

case LOAD: ...

case JUMP: ...

// hundreds of cases, order unpredictable

}

}

// Branch predictor struggles -- next opcode depends on user input.

// This is where a single-issue pipeline's lower complexity

// (shorter critical path, potentially higher clock) helps.

For a single-issue pipeline running irregular, non-loop-heavy code where branch prediction matters less, the bottleneck shifts elsewhere. Here the pipeline shows a different advantage: shorter critical path. There is less logic between the fetch of an instruction and its completion. A deeply pipelined or wide superscalar implementation introduces significant extra logic at every stage – the fetch unit must fetch and align multiple instructions, the decode unit must handle instruction pair dependencies, the issue logic must check whether two instructions can safely execute in parallel by evaluating all inter-instruction dependencies for every candidate pair. The register file needs more read ports. The forwarding network, which in a single-issue design has a small number of sources and destinations, now has many more paths to consider.

All of this combinational logic adds gates. More gates mean longer propagation delay through the stage. Longer propagation delay means either a lower maximum clock frequency (the same clock period that worked for a single-issue pipeline may not be achievable for a wider design), or a longer pipeline with more stages to keep the critical path per stage manageable. Longer pipelines increase the branch misprediction penalty because more stages are in flight and must be flushed on a wrong prediction. The tradeoffs compound on each other.

Critical path comparison (conceptual gate depth):

Single-issue EX stage forwarding mux:

2 sources (EX/MEM, MEM/WB) -> 2:1 mux -> ALU input

Gate depth: ~2-3 gates for mux

Dual-issue EX stage forwarding mux (slot 0):

Sources: EX/MEM slot0, EX/MEM slot1, MEM/WB slot0, MEM/WB slot1

-> 4:1 mux -> ALU input

Gate depth: ~4-5 gates for mux

Four-wide EX stage forwarding mux (one slot):

Sources: 4 EX/MEM slots + 4 MEM/WB slots = 8 sources

-> 8:1 mux -> ALU input

Gate depth: ~6-7 gates for mux

Each additional layer of mux depth can push the stage's critical path

past the target clock period, forcing either pipeline lengthening

or clock frequency reduction.

An unoptimised superscalar with no forwarding improvements, no improved prediction, and no hazard handling work will not necessarily outperform a carefully optimised single-issue pipeline. The single-issue pipeline here has been brought to a CPI of 1.0675. A superscalar that is stalling constantly from data hazards or taking four-cycle branch misprediction penalties due to a longer pipeline will burn cycles that its extra issue width cannot recover.

This is not an argument against superscalar. Wide, out-of-order, speculative superscalar processors are categorically faster in throughput for workloads with instruction-level parallelism, and the performance advantage grows with issue width when implemented correctly. But that performance comes from deep investment in all the associated structures. An implementation that adds dual-issue but skips the rest is paying area and complexity cost without capturing the performance benefit.

What Happens If These Optimisations Are Applied to the Superscalar?

A reasonable question: the pipelined core improved by 23% through these techniques. Would the same techniques applied to the superscalar show similar gains?

The short answer is: some of them would help, some would not transfer cleanly, and the complexity of implementing all of them correctly scales non-linearly with issue width. There is no guarantee the same percentage gains repeat, but improvements would likely show.

Amdahl’s Law frames why the unoptimised superscalar sits only 2.3% ahead of the optimised single-issue pipeline despite having twice the issue width. A dual-issue core has a theoretical CPI floor of 0.5, but data dependencies prevent this in practice. If a fraction $d$ of cycles cannot dispatch two independent instructions, the effective floor is:

$$\text{CPI}_{\text{floor, dual}} = 0.5 + 0.5d$$For a dependency rate of $d \approx 0.3$, typical for integer workloads with tight register reuse, this gives $\text{CPI}_{\text{floor, dual}} \approx 0.65$. An unoptimised superscalar running well above this floor due to avoidable stalls offers no advantage that its extra issue width promised. Adding issue width shifts the ceiling; it does not reduce the overhead sitting between the actual CPI and that ceiling. That overhead must be addressed separately, which is exactly what the techniques in this post do for the single-issue case.

Hazard optimisation (false load-use stall removal). For a dual-issue superscalar, the hazard logic already has to handle inter-instruction dependencies between the two instructions being issued together in the same cycle, plus dependencies on the previous cycle’s outputs. Adding source-aware hazard detection is additional logic but structurally similar work. The gain would be small (it was +0.002% here) but the correctness improvement is real.

Generalised load-use forwarding. In a single-issue pipeline, the forwarding paths are between a small fixed set of pipeline registers and the EX stage inputs. In a dual-issue superscalar, you have forwarding needs across two instruction slots for both the current cycle’s pair and the previous cycle’s results. The number of forwarding mux inputs roughly doubles. The routing becomes more complex and the potential for a timing violation (the forwarded value arriving too late in the cycle) increases. The gain should still exist because unnecessary stalls are still wasted cycles, but verifying correctness of the full forwarding matrix for a dual-issue core is significantly more work and requires careful attention to which slot’s result is forwarding to which other slot’s input.

Forwarding matrix complexity scaling:

Single-issue (N=1 slot):

Check: prev_rd vs curr_rs1, curr_rs2

Paths: 2 (one per operand)

Dual-issue (N=2 slots):

Check: slot0_rd vs slot1_rs1, slot1_rs2 (intra-cycle)

slot0_rd, slot1_rd vs next_slot0_rs1/rs2, next_slot1_rs1/rs2

Paths: ~8 forwarding cases to reason about

Four-wide (N=4 slots):

Intra-cycle dependencies alone: 4*3 = 12 pairs to check

Cross-cycle dependencies: 4 producers * 4 consumers * 2 operands = 32 paths

Total: ~44 forwarding cases before simplification

Early branch resolution. Moving branch resolution to ID gave the largest single gain (+8.5%) in the single-issue case. In a superscalar, this interaction is more complicated. If a branch appears in the first issue slot, the second issue slot must know the branch outcome before it can safely be allowed to execute. If the branch comparator in ID can resolve before the second slot’s EX begins, the improvement carries over. If not, you may need to restrict the second slot when a branch is in the first, which partially offsets the gain. The benefit would still be positive but smaller relative to baseline.

Tournament predictor and BTB. These structures are indexed by PC and operate independently of issue width. The same predictor hardware can serve a superscalar fetch unit with minimal changes since the prediction is based on the branch’s PC, not on how many other instructions are being fetched alongside it. The gain would be similar in absolute terms. If the superscalar baseline also has poor branch prediction (which an unoptimised one would), improving the misprediction rate translates to roughly the same cycle savings per misprediction avoided.

RAS. This is also largely independent of issue width. The call and return pattern is the same regardless of how many instructions are issued per cycle. A well-implemented RAS on the superscalar would show similar benefit.

Rough estimate overall: applying these same techniques to the superscalar implementation would likely yield meaningful improvement over the current unoptimised superscalar, probably more improvement than the 2.3% gap between the current superscalar and the best pipelined variant. The superscalar has more room to fall in CPI through branch prediction improvement alone. But the complexity of implementing and validating the combined design is substantially higher than what was done here for the single-issue case. The hazard logic in particular, when done correctly for dual-issue, requires careful enumeration of all inter-slot and cross-cycle dependency cases. The implementation effort is not twice as hard – it is harder than that, because the number of interaction cases between slots grows combinatorially.

More issue width beyond dual-issue compounds this further. Four-wide superscalar needs forwarding paths between all four slots and across multiple prior cycles. The hazard detection matrix becomes large. The forwarding mux at each execution unit input has many more inputs. Timing closure (keeping the critical path short enough to hit a target clock frequency) becomes the primary design challenge, and any optimisation that adds combinational depth to an already-tight path can fail to synthesize at the target frequency even if it is logically correct.

What This Does Not Cover

A single post cannot cover everything. Some directions that were not explored here but are standard in the literature:

Out-of-order execution changes the problem entirely. Rather than a static pipeline with forwarding, an OOO core has a reorder buffer that tracks instruction status, a physical register file with renaming to eliminate false dependencies, and issue queues that can select any ready instruction rather than only the oldest. The performance ceiling is much higher but so is the implementation complexity.

Caches. This entire experiment was run with ideal memory. In a real implementation, cache misses introduce variable latency loads that the pipeline cannot predict or avoid. Much of what a real processor’s memory system does is manage cache miss penalties gracefully through non-blocking cache support and memory-level parallelism.

Multi-cycle execution units. Division takes many cycles. Floating point operations take many cycles. A real implementation with these units needs additional hazard handling for results that are not available for a fixed number of cycles.

Prefetching. Fetching instructions ahead of where the PC currently is, based on predicted control flow, hides fetch latency. This matters more when instruction cache misses are in play.

Summary

Starting from a CPI of 1.3158 on a plain five-stage pipeline, the following techniques reduced CPI to 1.0675, a 23.26% improvement in CoreMark/MHz:

- Moving branch resolution to the ID stage with forwarding support for the branch comparator: +8.557%

- Adding a 2-bit saturating counter direction predictor: +6.547%

- Adding a branch target buffer for target prediction at the IF stage: +3.458%

- Generalising the forwarding network to cover MEM-to-EX paths for load results: +1.996%

- Replacing the local predictor with a tournament predictor: +0.455%

- Adding a JAL fast path and return address stack: +0.080%

The final result is a single-issue pipeline that comes within 2.3% of an unoptimised dual-issue superscalar on CoreMark. The gap exists because the superscalar can, when dependencies allow, retire two instructions per cycle – something a single-issue design cannot do regardless of how well its pipeline is managed. But the optimisations here demonstrate how much of a pipelined core’s performance is not lost to the fundamental IPC ceiling, but to avoidable hazards and mispredictions that add up to a 30% CPI overhead above the theoretical minimum of 1.0.

This overhead has a precise Amdahl interpretation. The avoidable stall fraction of total execution time in the baseline is:

$$p = \frac{\text{CPI}_{\text{baseline}} - \text{CPI}_{\text{floor}}}{\text{CPI}_{\text{baseline}}} = \frac{1.3158 - 1.0}{1.3158} \approx 0.240$$The theoretical maximum speedup from eliminating all stalls within a single-issue design is therefore:

$$S_{\max} = \frac{1}{1 - p} = \frac{1}{0.760} \approx 1.316$$The realized speedup after all optimisations is $\frac{1.3158}{1.0675} \approx 1.233$, capturing roughly 73.7% of that available headroom. The residual 0.0675 CPI above the floor is the serial fraction no single-issue technique eliminates.

Removing that overhead, step by step, with real measurements at each stage, is what this project was about.