Vision Language Action Models in Robotics

Robotics has traditionally been built as a stack of separate components. A perception module detects objects. A planner decides what to do. A controller executes motion. Each block is designed, tuned, and debugged independently.

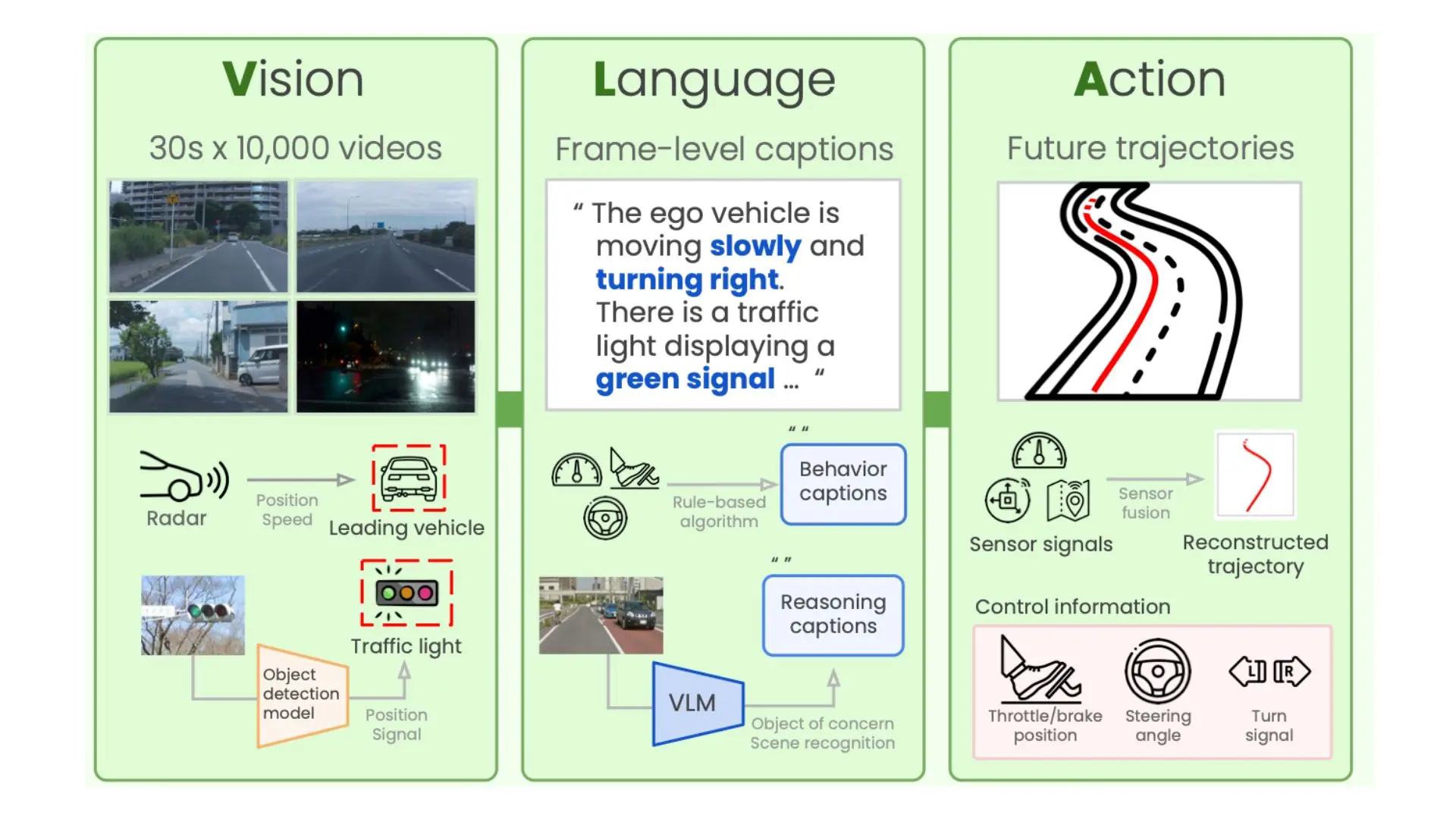

Vision Language Action, or VLA, models challenge that structure. Instead of splitting perception, reasoning, and control into separate systems, a single model takes visual input and a language instruction and directly outputs actions. The robot no longer needs a hand written planner or a task specific pipeline. The mapping from “what I see” and “what I am told” to “what I do” is learned.

This idea sounds simple, but it changes how robotic systems are built, trained, and scaled.

The Core Idea

At the heart of a VLA system is a learned policy that maps observations and a goal into actions. Formally, you can think of it as:

\[ a_t = f(o_t, g) \]

where:

- \( o_t \) is the observation at time \( t \), typically camera images plus robot state

- \( g \) is a language instruction or goal

- \( a_t \) is the action issued at that time step
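As a rough sketch of that interface, the policy can be written as a plain function from an observation and an instruction to an action vector. The `Observation` type, the `Policy` alias, and the `zero_policy` placeholder are illustrative assumptions, not part of any particular VLA implementation.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Observation:
    """o_t: what the robot perceives at one time step (hypothetical layout)."""
    image: List[List[float]]       # camera frame, here a tiny grayscale grid
    joint_positions: List[float]   # robot state


# a_t = f(o_t, g): any callable with this signature counts as a policy here.
Policy = Callable[[Observation, str], List[float]]


def zero_policy(obs: Observation, instruction: str) -> List[float]:
    """Placeholder policy: returns a zero command for every joint."""
    return [0.0 for _ in obs.joint_positions]


obs = Observation(image=[[0.0, 0.0], [0.0, 0.0]], joint_positions=[0.1, -0.4, 0.7])
action = zero_policy(obs, "open the drawer")
```

A learned model would replace `zero_policy`, but the calling code would not need to change.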

Unlike classical robotic systems, there is no explicit symbolic planner sitting between perception and control. The reasoning is embedded in the model weights.

The model does not just recognize objects. It decides how to move.

How Traditional Robotics Differs

In a classical pipeline, the structure often looks like this:

- Vision system detects objects and estimates poses.

- A symbolic planner builds a sequence of steps.

- Motion planning computes trajectories.

- A controller executes the trajectory.

Each stage requires careful engineering. If perception fails, planning fails. If planning produces infeasible goals, motion planning fails. Debugging means tracing errors across modules.

VLA models compress much of this stack into a single trainable system. That does not eliminate complexity. It moves the complexity into data, model architecture, and training strategy.

What “Vision”, “Language”, and “Action” Really Mean

Vision

Vision input usually includes:

- RGB images

- Depth images

- Video sequences

- Sometimes segmentation masks

A vision encoder transforms raw pixels into embeddings. This encoder is often:

- A convolutional neural network

- A Vision Transformer

- A pretrained multimodal backbone

The output is a high dimensional feature vector, or a sequence of per patch embeddings, representing scene content and spatial relationships.

Language

Language input can be:

- A task instruction such as “open the drawer”

- A goal description such as “stack the red block on the blue block”

- A correction such as “move slightly to the left”

A language encoder transforms text into embeddings. This is usually a transformer model trained on large text corpora.

The key idea is that language provides task conditioning. The same visual scene can produce different actions depending on the instruction.
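To make task conditioning concrete, here is a deliberately tiny stand-in for a policy. It is hand written rather than learned, and it reads object positions from a dictionary instead of pixels, so `toy_policy` and the `scene` layout are assumptions made purely for illustration. The point is only that the scene stays fixed while the instruction changes the action.

```python
from typing import Dict, Tuple


def toy_policy(scene: Dict[str, Tuple[float, float]],
               gripper_xy: Tuple[float, float],
               instruction: str) -> Tuple[float, float]:
    """Return a planar displacement toward the first object named in the instruction."""
    for name, (x, y) in scene.items():
        if name in instruction:
            return (x - gripper_xy[0], y - gripper_xy[1])
    return (0.0, 0.0)  # no known object mentioned: stay still


scene = {"red block": (0.5, 0.25), "blue block": (-0.25, 0.5)}
gripper = (0.0, 0.0)

# Same scene, two instructions, two different actions.
to_red = toy_policy(scene, gripper, "pick up the red block")
to_blue = toy_policy(scene, gripper, "pick up the blue block")
```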

Action

Action outputs vary by system. Common representations include:

- Joint position targets

- Joint velocity commands

- End effector pose deltas

- Gripper open or close signals

- Discrete action tokens

For continuous control, the model may output a vector:

\[ a_t \in \mathbb{R}^n \]

where \( n \) is the number of controlled degrees of freedom.

In practice, low level motor control is often still handled by classical controllers. The VLA model typically operates at a slightly higher level, predicting targets or velocities rather than raw torque.
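One possible action layout, sketched under the assumption of a position delta plus a binary gripper command (so \( n = 4 \)), might look like the following. The class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EndEffectorAction:
    """One hypothetical action layout: position delta plus a gripper command."""
    dx: float
    dy: float
    dz: float
    gripper_closed: bool

    def to_vector(self) -> List[float]:
        # Flatten to a_t in R^n with n = 4 (three translations + gripper).
        return [self.dx, self.dy, self.dz, 1.0 if self.gripper_closed else 0.0]


def apply_delta(position: List[float], action: EndEffectorAction) -> List[float]:
    """Compute the target position that would be handed to the low level controller."""
    return [position[0] + action.dx, position[1] + action.dy, position[2] + action.dz]


action = EndEffectorAction(dx=0.25, dy=0.0, dz=-0.5, gripper_closed=True)
vector = action.to_vector()
target = apply_delta([1.0, 2.0, 3.0], action)
```

The model only proposes `target`; reaching it is left to whatever controller sits underneath.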

Architectural Patterns

Most VLA models share a common high level structure:

- Vision encoder

- Language encoder

- Fusion mechanism

- Policy head

The fusion stage combines visual and linguistic features. Attention mechanisms are commonly used so that the model can align words like “red” or “left” with specific regions in the image.

A simplified flow:

    Image --> Vision Encoder ----\
                                  --> Fusion --> Policy --> Action
    Text  --> Language Encoder --/

The policy head maps the fused representation to actions.
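The flow above can be sketched in a few lines. To keep it short, this example swaps attention based fusion for plain concatenation and uses a single linear layer as the policy head. The embeddings, weights, and bias are made up values, and real encoders are replaced by constants.

```python
from typing import List


def fuse(vision_embedding: List[float], language_embedding: List[float]) -> List[float]:
    """Simplest possible fusion: concatenate the two embeddings."""
    return vision_embedding + language_embedding


def policy_head(fused: List[float], weights: List[List[float]], bias: List[float]) -> List[float]:
    """Linear map from the fused representation to an action vector."""
    return [sum(w * x for w, x in zip(row, fused)) + b for row, b in zip(weights, bias)]


vision_embedding = [1.0, 2.0]      # stand-in for the vision encoder output
language_embedding = [0.5, -1.0]   # stand-in for the language encoder output
weights = [[1.0, 0.0, 2.0, 0.0],   # hypothetical 2 x 4 weight matrix
           [0.0, 1.0, 0.0, 1.0]]
bias = [0.0, 0.5]

fused = fuse(vision_embedding, language_embedding)
action = policy_head(fused, weights, bias)
```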

Some systems use autoregressive token prediction, where actions are treated like language tokens. Others use direct regression for continuous control.
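One way to treat actions like tokens is to discretize each action dimension into uniform bins. The sketch below assumes 256 bins and actions normalized to the range -1 to 1; both numbers are illustrative choices, not values taken from a specific system.

```python
from typing import List

NUM_BINS = 256          # assumed vocabulary size per action dimension
LOW, HIGH = -1.0, 1.0   # assumed normalized action range


def action_to_tokens(action: List[float]) -> List[int]:
    """Map each continuous value to the index of its uniform bin."""
    tokens = []
    for value in action:
        clipped = min(max(value, LOW), HIGH)
        fraction = (clipped - LOW) / (HIGH - LOW)
        tokens.append(min(int(fraction * NUM_BINS), NUM_BINS - 1))
    return tokens


def tokens_to_action(tokens: List[int]) -> List[float]:
    """Decode each token to the center of its bin."""
    width = (HIGH - LOW) / NUM_BINS
    return [LOW + (token + 0.5) * width for token in tokens]


original = [-1.0, 0.0, 0.5, 1.0]
tokens = action_to_tokens(original)
decoded = tokens_to_action(tokens)
```

Decoding returns bin centers, so the round trip loses at most half a bin width per dimension. That quantization error is the price of reusing a token prediction head.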

Training Data: The Real Bottleneck

The quality and diversity of training data determine how capable a VLA system becomes.

Typical training samples contain:

- Observation frames

- A natural language description

- An expert action sequence
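A training sample with these three parts could be represented as follows. The `Demonstration` class and its field names are assumptions for illustration, and frames are reduced to short lists of numbers.

```python
from dataclasses import dataclass
from typing import Iterator, List, Tuple


@dataclass
class Demonstration:
    instruction: str
    frames: List[List[float]]    # one flattened image per time step
    actions: List[List[float]]   # one expert action per time step

    def __post_init__(self) -> None:
        if len(self.frames) != len(self.actions):
            raise ValueError("each frame needs exactly one expert action")

    def steps(self) -> Iterator[Tuple[List[float], str, List[float]]]:
        """Yield (observation, instruction, expert action) training tuples."""
        for frame, action in zip(self.frames, self.actions):
            yield frame, self.instruction, action


demo = Demonstration(
    instruction="open the drawer",
    frames=[[0.0, 0.1], [0.2, 0.3]],
    actions=[[0.05, 0.0], [0.0, -0.05]],
)
samples = list(demo.steps())
```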

A common training objective is behavior cloning:

\[ \mathcal{L} = \sum_t \left\| a_t^{\text{pred}} - a_t^{\text{expert}} \right\|^2 \]

The model learns to imitate the expert.
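Read as a sum of squared Euclidean distances between predicted and expert actions, the objective can be computed in a few lines. The trajectories below are made up numbers chosen so the arithmetic is easy to check by hand.

```python
from typing import List


def behavior_cloning_loss(predicted: List[List[float]], expert: List[List[float]]) -> float:
    """Sum over time steps of the squared Euclidean distance between actions."""
    total = 0.0
    for a_pred, a_exp in zip(predicted, expert):
        total += sum((p - e) ** 2 for p, e in zip(a_pred, a_exp))
    return total


predicted = [[0.5, 0.0], [1.0, 1.0]]
expert = [[0.0, 0.0], [1.0, 2.0]]

# Step 0 contributes 0.5^2 = 0.25, step 1 contributes 1.0^2 = 1.0.
loss = behavior_cloning_loss(predicted, expert)
```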

However, robotic data collection is expensive. Real world demonstrations require:

- Physical hardware

- Human supervision

- Safety monitoring

Simulation helps scale data, but introduces a gap between simulated and real dynamics.

Scaling Across Tasks

One major motivation for VLA models is multitask learning. Instead of training separate controllers for each task, you train a single model across many tasks.

For example:

- Pick and place

- Drawer opening

- Tool use

- Object sorting

Language conditioning allows the same model to perform different behaviors. The instruction changes the goal without changing the architecture.

If trained on enough diversity, the model can generalize to novel combinations. It may never have seen “place the yellow block behind the cup,” but if it has learned “yellow,” “behind,” and “cup” from other instructions, it can often recombine them.

Handling Long Horizon Tasks

Short tasks like picking up an object are manageable. Long tasks such as:

- Setting a table

- Folding laundry

- Assembling parts

require memory and planning across many time steps.

Some VLA systems incorporate recurrence or transformers with long context windows. The idea is to allow the model to remember previous states and reason about progress.

The policy then takes the form:

\[ a_t = f(o_{\leq t}, g) \]

where the model conditions on the history of observations up to time \( t \).
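In practice the history is usually truncated to a fixed window. A minimal sketch of that idea, assuming a window of three observations and a placeholder policy that simply averages them, is shown below. `HistoryBuffer` and `mean_history_policy` are hypothetical names.

```python
from collections import deque
from typing import Deque, List


class HistoryBuffer:
    """Keeps the most recent observations so the policy can condition on o_{<=t}."""

    def __init__(self, max_length: int) -> None:
        self._frames: Deque[List[float]] = deque(maxlen=max_length)

    def push(self, observation: List[float]) -> None:
        self._frames.append(observation)  # oldest entry is dropped when full

    def context(self) -> List[List[float]]:
        return list(self._frames)


def mean_history_policy(history: List[List[float]], instruction: str) -> List[float]:
    """Placeholder for f(o_{<=t}, g): averages the history, ignores the instruction."""
    n = len(history)
    return [sum(values) / n for values in zip(*history)]


buffer = HistoryBuffer(max_length=3)
for t in range(5):
    buffer.push([float(t), 0.0])

window = buffer.context()
action = mean_history_policy(window, "fold the towel")
```

A fixed window only approximates conditioning on the full history. Anything older than the window has to be recoverable from the current scene or it is lost.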

Interaction with Classical Control

Even in end to end systems, classical robotics is not fully removed.

Low level loops often run at high frequency, sometimes hundreds of hertz. Inference in a large neural network is often too slow to meet those timing constraints.

A common architecture is hierarchical:

- VLA model outputs a desired pose or velocity.

- A traditional controller ensures stable tracking.

This separation preserves stability while allowing high level flexibility.
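The two rate structure can be simulated in a few lines. The rates, the gain, and the one dimensional velocity controlled joint below are all assumptions chosen for illustration, and `slow_policy` stands in for the VLA model.

```python
POLICY_HZ = 5        # assumed rate at which the VLA model produces targets
CONTROL_HZ = 500     # assumed rate of the low level control loop
TICKS_PER_TARGET = CONTROL_HZ // POLICY_HZ
DT = 1.0 / CONTROL_HZ
KP = 20.0            # proportional gain, chosen for illustration only


def slow_policy(position: float) -> float:
    """Stand-in for the VLA model: always asks for position 1.0."""
    return 1.0


def control_tick(position: float, target: float) -> float:
    """One step of a proportional controller driving a velocity controlled joint."""
    velocity = KP * (target - position)
    return position + velocity * DT


position = 0.0
for policy_step in range(10):            # 10 policy calls = 2 simulated seconds
    target = slow_policy(position)       # expensive, infrequent
    for tick in range(TICKS_PER_TARGET): # cheap, frequent
        position = control_tick(position, target)
```

With these numbers the controller runs 100 ticks for every policy output, and the tracking error shrinks by a factor of 0.96 per tick.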

Memory and Attention in Physical Interaction

Language often refers to spatial relationships:

- “to the left of”

- “behind”

- “on top of”

Attention mechanisms allow the model to focus on relevant regions of the image when processing certain words. This alignment between language tokens and visual patches is critical.

For example, when the instruction says “red block,” the model learns to assign higher attention weights to the image patches that contain red regions.
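The mechanics can be shown with scaled dot product attention from a single text token to three image patches. The two dimensional embeddings are invented for this example, with the first axis loosely standing for "redness". In a trained model these vectors would be learned.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: List[float], keys: List[List[float]]) -> List[float]:
    """Scaled dot product attention of one text token over image patches."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Hypothetical embeddings in which the first axis encodes "redness".
red_token = [4.0, 0.0]
patches = [[0.1, 0.9],   # grey table
           [1.0, 0.2],   # red block
           [0.0, 1.0]]   # blue cup

weights = attention_weights(red_token, patches)
```

The weights sum to one, and most of the mass lands on the second patch, the one with the largest component along the "red" axis.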

This internal alignment replaces explicit symbolic grounding used in earlier robotics systems.

Benefits of the VLA Approach

Unified Learning

Instead of designing perception and planning separately, the model learns both jointly.

Improved Generalization

Language conditioning and large scale pretraining enable transfer across tasks and environments.

Simpler Interfaces

Humans can issue natural language instructions rather than structured commands.

Data Driven Improvement

Adding new tasks may require only additional demonstrations, not redesigning the architecture.

Limitations and Risks

Data Hunger

Large models require large datasets. Collecting sufficient robotic interaction data remains challenging.

Safety Concerns

End to end policies can produce unexpected behavior. In safety critical environments, guard layers and monitoring systems are necessary.
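A guard layer can be as simple as clamping what the policy asks for before it reaches the controller. The sketch below assumes a per step displacement limit and a box shaped workspace. The numbers are placeholders, and a real system would need far more than this (collision checks, force limits, an emergency stop).

```python
from typing import List, Tuple

MAX_STEP = 0.05  # assumed limit on per step displacement, in meters
WORKSPACE: List[Tuple[float, float]] = [(-0.5, 0.5), (-0.5, 0.5), (0.0, 0.8)]  # assumed bounds


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def guard(position: List[float], delta: List[float]) -> List[float]:
    """Limit the step size, then keep the commanded target inside the workspace."""
    safe_target = []
    for p, d, (low, high) in zip(position, delta, WORKSPACE):
        step = clamp(d, -MAX_STEP, MAX_STEP)
        safe_target.append(clamp(p + step, low, high))
    return safe_target


# The policy requests a large move that would leave the workspace and go below the table.
target = guard([0.48, 0.0, 0.02], [0.3, 0.01, -0.2])
```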

Real Time Constraints

Transformer based models can be computationally heavy. Running them on embedded hardware requires optimization.

Interpretability

Understanding why the model chose a specific action is difficult. Debugging often requires inspecting attention maps or intermediate embeddings.

Example Scenario: Language Guided Manipulation

Consider a robot arm in front of a cluttered table. The instruction is:

“Pick up the small green cup and place it next to the book.”

The VLA model processes the camera image and instruction.

At each time step:

\[ a_t = f(o_t, g) \]

Over successive time steps, the model effectively:

- Identifies the green cup.

- Plans a grasp trajectory.

- Lifts the object.

- Locates the book.

- Places the cup next to it.

There is no explicit symbolic sequence like “grasp, lift, move, place.” The sequence emerges from the learned mapping.
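The closed loop structure behind this can be sketched with a one dimensional toy world. `toy_policy` is a hand written stand-in for the learned model, and the dictionary based state is an assumption. What matters is that the loop contains no list of stages: it just queries the policy once per time step.

```python
from typing import Callable, Dict, List

State = Dict[str, float]


def toy_policy(observation: State, instruction: str) -> float:
    """Stand-in for f(o_t, g): step toward the cup, at most 0.25 per call."""
    error = observation["cup"] - observation["gripper"]
    return max(-0.25, min(0.25, error))


def rollout(policy: Callable[[State, str], float], state: State,
            instruction: str, max_steps: int) -> List[float]:
    """Query the policy once per time step and apply its action to the world."""
    actions = []
    for _ in range(max_steps):
        action = policy(dict(state), instruction)  # o_t is a fresh snapshot
        if action == 0.0:
            break                                  # nothing left to do
        state["gripper"] += action
        actions.append(action)
    return actions


world = {"gripper": 0.0, "cup": 1.0}
actions = rollout(toy_policy, world, "pick up the small green cup", max_steps=20)
```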

Relationship to Foundation Models

Many VLA systems build on pretrained vision language backbones. These foundation models have already learned:

- Object categories

- Spatial relationships

- Language semantics

Fine tuning adds an action prediction head and robotic data. This reuses what the backbone learned from internet scale datasets for perception and reasoning, reducing the amount of robot specific labeled data required.
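A stripped down version of this recipe keeps the backbone frozen and fits only a small action head on top of its features. In the sketch below, `frozen_backbone` is a fixed function standing in for a pretrained encoder, the head is a single linear layer, and the three sample dataset is invented. Real fine tuning often updates part of the backbone as well.

```python
from typing import List, Tuple


def frozen_backbone(image: List[float]) -> List[float]:
    """Stand-in for a pretrained encoder; its parameters are never updated."""
    return [image[0] + image[1], image[0] - image[1]]


def train_action_head(dataset: List[Tuple[List[float], float]],
                      learning_rate: float = 0.1, epochs: int = 200) -> List[float]:
    """Fit a linear head on frozen features by gradient descent on squared error."""
    w = [0.0, 0.0]
    for _ in range(epochs):
        for image, expert_action in dataset:
            features = frozen_backbone(image)
            prediction = sum(wi * fi for wi, fi in zip(w, features))
            error = prediction - expert_action
            # Gradient of error^2 with respect to w is 2 * error * features.
            w = [wi - learning_rate * 2.0 * error * fi for wi, fi in zip(w, features)]
    return w


# Hypothetical demonstrations: (image, expert action) pairs.
dataset = [([1.0, 0.0], 1.0), ([0.0, 1.0], 0.0), ([1.0, 1.0], 1.0)]
head = train_action_head(dataset)
```

Only `head` changes during training, which is why a modest amount of robot data can be enough when the backbone features are already informative.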

The result is a hybrid: internet scale perception combined with embodied interaction data.

Toward General Purpose Robots

The long term goal is a robot that can:

- Understand open ended instructions

- Adapt to new environments

- Learn continuously from interaction

VLA models are a step in that direction. They unify perception, language understanding, and action generation into a single trainable framework.

The core abstraction remains:

\[ \text{Observation} + \text{Instruction} \rightarrow \text{Action} \]

Everything else is architecture and data.

Final Thoughts

Vision Language Action models represent a structural change in robotics. Instead of carefully engineered pipelines, they rely on large scale learning to map what the robot sees and is told directly to what it does.

They do not remove the need for hardware expertise, control theory, or safety engineering. They shift emphasis toward:

- Data quality

- Model scaling

- Training stability

- System integration

As datasets grow and compute becomes more accessible, VLA models are likely to play a central role in building adaptable, instruction driven robots. The main open question is not whether they work in controlled settings, but how reliably they can operate in the messy, unpredictable real world.